Awesome build, what about case fans? I’m asking because I’m sure some people will want to just copy it! Also, would you mind sharing CPU/GPU top/side temps under full load?

This particular case came with 3 large case fans preinstalled. I only had to move one to make space for the AIO for the GPUs. Since the 2 AIOs have 2 fans on them as well, airflow seems decent enough.

When I stress test the CPU it reaches its +95°C limit, and at idle it sits at +50°C. Maybe I shouldn’t have kept the pre-applied thermal paste on the cooler :). On the other hand, realistic workloads might not reach the thermal-throttling range at all.

The GPUs stay below +55°C at full load, so those hold up fine.

I do have two temperature sensors in the case, but I haven’t been able to read them out yet (this new chipset apparently still needs some Linux driver support). Other devices stay at +50°C when the CPU is blasting, though.
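For anyone in the same boat: once a driver for the board’s sensor chip is loaded, Linux normally exposes temperatures through the hwmon sysfs interface (`/sys/class/hwmon/hwmon*/temp*_input`, values in millidegrees Celsius, with optional `temp*_label` files). A minimal sketch for dumping everything found there, assuming the sensors do show up in that standard layout; the `root` parameter is only there so the function can be pointed at a test directory:

```python
from pathlib import Path


def read_hwmon_temps(root="/sys/class/hwmon"):
    """Return {"<chip>/<sensor>": degrees Celsius} for every hwmon temperature input.

    Assumes the standard Linux hwmon sysfs layout: each hwmonN directory may
    contain a `name` file, `tempN_input` files in millidegrees Celsius and
    optional `tempN_label` files.
    """
    readings = {}
    for hwmon in sorted(Path(root).glob("hwmon*")):
        name_file = hwmon / "name"
        chip = name_file.read_text().strip() if name_file.exists() else hwmon.name
        for temp_input in sorted(hwmon.glob("temp*_input")):
            # temp1_input -> temp1_label (labels are optional)
            label_file = temp_input.with_name(temp_input.name.replace("_input", "_label"))
            label = label_file.read_text().strip() if label_file.exists() else temp_input.name
            try:
                millidegrees = int(temp_input.read_text().strip())
            except (OSError, ValueError):
                continue  # sensor is listed but not readable; skip it
            readings[f"{chip}/{label}"] = millidegrees / 1000.0
    return readings


if __name__ == "__main__":
    for sensor, celsius in read_hwmon_temps().items():
        print(f"{sensor}: {celsius:.1f} °C")
```

If the sensors still do not appear, the missing piece is the kernel driver rather than the script; `lm-sensors` reads the same interface.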

Stronk Brag

While we are diligently working towards the next set of PRs to the Livepeer AI project, the Stronk Orchestrator reached a new milestone last month: becoming the #1 fee earner in April 2024.

At the request of other node operators, in April 2022 we started tracking & posting a monthly leaderboard in the Livepeer Discord payout channel, summarizing activity on Livepeer’s smart contracts and how fee earnings were distributed across Orchestrators.

Now, two years later, the Stronk Orchestrator took home over 7% of all fees that month. Thanks to our excellent pricing strategy and our participation in the AI alpha, this is the first time our Orchestrator has claimed the #1 spot in fee earnings!
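At its core, the leaderboard sums the fees each Orchestrator earned over the month and divides by the network total. A minimal sketch, assuming the month’s fee events have already been pulled from the chain into hypothetical `(orchestrator, fee_eth)` pairs; the names and numbers below are made up for illustration:

```python
from collections import defaultdict


def fee_leaderboard(events):
    """Rank orchestrators by total fees and compute each one's share of all fees.

    `events` is an iterable of (orchestrator, fee_eth) pairs. This input format
    is an assumption for illustration; real data would come from on-chain events.
    Returns a list of (orchestrator, total_eth, share) sorted by total, highest first.
    """
    totals = defaultdict(float)
    for orchestrator, fee_eth in events:
        totals[orchestrator] += fee_eth
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(orch, total, total / grand_total) for orch, total in ranked]


# Hypothetical example data, not the real April 2024 numbers.
events = [("orch-a", 1.0), ("orch-b", 3.0), ("orch-a", 1.0)]
for rank, (orch, total, share) in enumerate(fee_leaderboard(events), start=1):
    print(f"#{rank} {orch}: {total:.2f} ETH ({share:.1%})")
```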

Due to our 0% fee cut, any ETH we earn immediately gets distributed to our Delegators.

Stay tuned for more updates. While the AI SPE team is working hard laying the foundations for robust AI inference at scale, we’re excited to unlock new use cases for the AI subnet.

The next post will feature an extensive list of changes made to the AI pipeline and what this means for developers who want to leverage these features.

Stronk update

It has been a while since the last update. These are the things we’ve been working on:

First of all, we’ve added a node in São Paulo, allowing us to service South America!

As one of the maintainers of MistServer (a free, public domain media server), we’ve been working on the following features:

- MistServer now has hardware-accelerated transcoding support.

- MistServer now has SDI support (raw video input/output), an important feature for bridging traditional livestreaming and the broadcasting industry.

- Fixes and enhancements to HLS support.

Since MistServer is the media server of choice for Livepeer Catalyst, any feature added or bug squashed also, by extension, makes Livepeer’s decentralized media server more capable and opens the way for new use cases.

Other stuff in the pipeline:

- StreamCrafter got some interface tweaks and fixes and will see some more love in the following weeks.

- AI R&D and bounties, like LoRA support, the text-to-video pipeline or node operator Grafana dashboards.

- We’re still working on adding proper, hardware-accelerated Intel support to Livepeer.

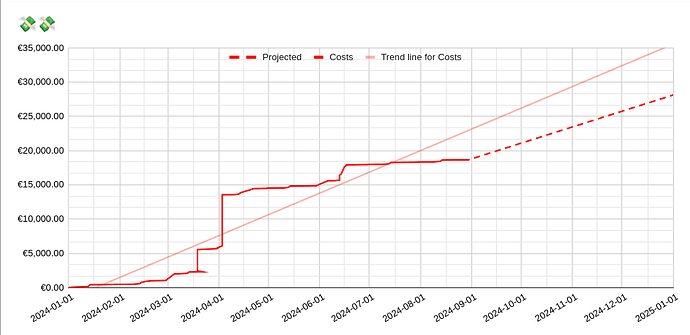

Costs of operating Stronk Tech

It might be interesting for Delegators to see what costs are associated with being a Livepeer node operator.

2024 has seen significant expenses so far. Of the ~€19K in costs, about €3K were running costs for the node, such as websites, domains, GPU servers (outside the EU) and other small business expenses.

The rest of the costs went into upgrading network equipment, adding an AI server and upgrading (transcoding) GPUs.

We project total costs of €25K to €35K by the end of the year, as we still have additional GPUs we want to add to the AI subnet, especially to serve some of the more demanding models like Flux or certain LLMs.

Stay tuned for more updates. Stake to Stronk Tech to support our efforts and increase your ownership of the network. With the crypto market slowly inching towards an altcoin bull run, you don’t want to wait too long to accumulate your LPT!

Stronk Livepeer & AI Update

Our submission for the ‘AI SPE Orchestrator Logo Generation Competition’

Hello Delegators and node operators! Here’s the latest on my Livepeer node and AI infrastructure:

Codebase Updates

The LoRA PRs have been merged and the txt2vid pipeline PR is currently in code review. These updates are paving the way for the next phase of improvements.

AI Dashboard Decoupling

With Livepeer AI becoming more feature-complete, we are decoupling our AI dashboard from our AI fork and running it on the public network instead. This enables more models and pipelines, and frees up a GPU, which will be added to the AI subnet!

EU Transcoding Upgrade

The EU node has been underperforming due to overutilization during peak times. To address this, we are replacing the current 2x 4060 Ti cards with 3x 4000 ADA cards to triple our stream capacity.

EU Network Infrastructure Overhaul

We have completed a full restructuring of our network infrastructure to ensure better performance, reliability, and security. This includes installing new high-performance switches and routers to maximize throughput and minimize latency, along with advanced intrusion detection systems to safeguard our operations. These upgrades bring our setup in line with what you would expect from a modern data center, providing a robust foundation for future growth and stability.

New AI Machine Setup

In addition to the existing AI machine equipped with 4090s (ideal for video diffusion due to their speed), a new machine is being set up. It features 2x 6000 ADA cards for LLMs, Flux, or other high-VRAM models, as well as 1x 4000 ADA (available once the AI dashboard is decoupled). This setup will significantly expand our capacity to handle diverse AI workloads.

Thanks for your support! I’m excited about these upgrades and the positive impact they’ll bring. A big shoutout to all my Delegators for making these kinds of investments possible.

Stronk hardware upgrade

New hardware

As a follow-up to the previous post, the new hardware is now online! It is built from proper server-grade components, giving plenty of headroom to add extra cards, run RPC nodes and other fun stuff.

All of our other servers have been migrated to a rack case and installed in the new server cabinet as well.

The new machine - serving Flux models and virtual machines!

Specs:

Case: Silverstone RM600 Rack

Mobo: Supermicro MBD-H13SSL

CPU: AMD EPYC 9374F

Cooler: Silverstone XE360-SP5

RAM: 12x32GB Kingston Technology Renegade Pro EXPO

Root storage (mirrored): 2x Micron 5400 MAX 960 GB

VM storage (mirrored): 2x Micron 7450 PRO M.2 3.84 TB

GPU: NVIDIA RTX 6000 Ada 48 GB

GPU: NVIDIA RTX 6000 Ada 48 GB

PSU: Corsair AX1600i

Case fans: 4x Noctua NF-A14x25 G2

This gives us the following set of GPUs connected to the Livepeer network:

Transcoding

- 1x GTX 1080 @ Singapore

- 1x GTX 1080 @ São Paulo

- 1x GTX 1080 Ti @ Boston

- 3x RTX 4000 ADA @ Leiden

AI

- 2x RTX 4090 @ Leiden

- 2x RTX 6000 ADA @ Leiden

- 1x RTX 4000 ADA @ Leiden (soon™️)

The cats were very helpful with transferring our hardware into new cases

The network overhaul, the new GPUs and the addition of a São Paulo node have already noticeably improved our leaderboard position!

AI performance dashboard

To get a better overview of which models need more supply, we have created a simple web app that parses AI performance statistics (as provided by the Cloud SPE).

This allows us to answer the following questions:

- Does a model/pipeline have enough (high performing) supply?

- Which nodes are participating in the AI subnet and to what extent?
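Both questions come down to grouping performance samples two ways: by pipeline/model and by node. A minimal sketch, assuming each sample has been parsed into a hypothetical `PerfRecord` with a node ID, pipeline, model and a normalized score; the field names, the score scale and the `min_score` threshold are illustrative assumptions, not the actual format of the Cloud SPE statistics:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PerfRecord:
    """One hypothetical performance sample for a node serving a pipeline/model."""
    node: str       # orchestrator identifier
    pipeline: str   # e.g. "text-to-image"
    model: str      # model ID
    score: float    # hypothetical normalized performance score, higher is better


def summarize(records, min_score=0.8):
    """Answer the two dashboard questions from a list of PerfRecord samples.

    Returns:
      supply: {(pipeline, model): (node_count, high_performing_node_count)}
      participation: {node: number of (pipeline, model) pairs it serves}
    A node counts as high performing for a model if any of its samples
    reaches `min_score` (an arbitrary, hypothetical threshold).
    """
    nodes_per_model = defaultdict(set)
    strong_nodes_per_model = defaultdict(set)
    models_per_node = defaultdict(set)
    for r in records:
        key = (r.pipeline, r.model)
        nodes_per_model[key].add(r.node)
        if r.score >= min_score:
            strong_nodes_per_model[key].add(r.node)
        models_per_node[r.node].add(key)
    supply = {
        key: (len(nodes), len(strong_nodes_per_model[key]))
        for key, nodes in nodes_per_model.items()
    }
    participation = {node: len(keys) for node, keys in models_per_node.items()}
    return supply, participation


# Hypothetical example samples.
records = [
    PerfRecord("node-1", "text-to-image", "flux-dev", 0.9),
    PerfRecord("node-2", "text-to-image", "flux-dev", 0.5),
    PerfRecord("node-1", "image-to-video", "model-x", 0.85),
]
supply, participation = summarize(records)
```

A model whose high-performing node count is low relative to demand is a candidate for adding supply.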

The new dashboard can be found here.

Next up: stronk.rocks redesign

One of our oldest contributions is a ‘smart contract explorer’, which gives Orchestrators and Delegators insight into what is happening inside the black box of smart contracts.

In preparation for the new backend API, we will be releasing a new skin for stronk.rocks (formerly known as nFrame). We’ve rewritten all components and are now ironing out the last kinks. Most notably, the Livepeer smart contract explorer now looks a lot more compact and the entire website should behave properly on mobile devices as well.

Node Update: More Stake, More ETH, Lower Cut!

The Stronk Orchestrator just crossed 800k LPT in stake, up +300k LPT recently!

Thanks to our active role in the AI subnet running the high-quality flux-dev model, the realtime video pipeline and the new autonomous agent pipeline from the Agent SPE, our ETH earnings have exploded (all going directly to our delegators)!

We’re on track to earn nearly 1.5 ETH in June, punching far above our weight relative to stake.

After over 600 days at a consistent 15% reward cut / 0% fee cut, we’re now adjusting:

Reward Cut: 11%

Fee Cut: 0% (unchanged)

This means it is now more profitable than ever to stake your LPT with Stronk Tech!
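To make the change concrete: in a simplified model, a Delegator receives the part of the Orchestrator’s rewards and fees left after the cut, split pro rata by stake. The sketch below ignores protocol details such as round timing, reward compounding and gas costs, and every stake, reward and fee figure in it is hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cuts:
    reward_cut: float  # fraction of LPT rewards the Orchestrator keeps (0.0-1.0)
    fee_cut: float     # fraction of ETH fees the Orchestrator keeps (0.0-1.0)


def delegator_share(cuts, reward_lpt, fees_eth, my_stake, total_stake):
    """Simplified pro-rata payout: (LPT, ETH) a Delegator receives from one payout.

    Ignores round mechanics, compounding and gas; inputs are illustrative only.
    """
    if not 0 < my_stake <= total_stake:
        raise ValueError("stake must be positive and at most the total stake")
    fraction = my_stake / total_stake
    lpt = reward_lpt * (1 - cuts.reward_cut) * fraction
    eth = fees_eth * (1 - cuts.fee_cut) * fraction
    return lpt, eth


OLD = Cuts(reward_cut=0.15, fee_cut=0.0)
NEW = Cuts(reward_cut=0.11, fee_cut=0.0)

# Hypothetical: 10k LPT delegated out of 800k total, 1,000 LPT reward, 1 ETH fees.
for label, cuts in (("15% reward cut", OLD), ("11% reward cut", NEW)):
    lpt, eth = delegator_share(cuts, reward_lpt=1_000, fees_eth=1.0,
                               my_stake=10_000, total_stake=800_000)
    print(f"{label}: {lpt:.3f} LPT, {eth:.4f} ETH")
```

With a 0% fee cut the ETH term is simply the full pro-rata share, so only the LPT side changes between the two settings.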

Next milestone:

Drop reward cut to 8% when we hit 1M LPT staked.